Unfortunately, the Obama administration does not seem to care and the U.S. is retreating into second tier status. Such a degradation is not only completely unnecessary, but needlessly weakens the economic competitiveness of the U.S. and puts our citizens at risk. Amazingly, Congress appropriated the money to address this problem a year and a half ago, but the administration has not made use of the funds. There are words to describe such inaction, but this is a family oriented blog.

Numerical weather prediction (NWP) is the central technology of weather forecasting. State-of-the-art weather prediction demands huge computer resources and thus the ability to forecast well depends on access to the top supercomputers in the world. Some numerical weather prediction models are run globally at moderate resolution, while others are run at ultra high resolution over smaller domains to predict small-scale features such as severe thunderstorms. Thus, a large nation, like the U.S., requires far more computer power than, say, South Korea or the United Kingdom.

During the past several years, I have blogged repeatedly about the lack of computer power available to U.S. operational weather prediction, and particularly the forecasts made at the NOAA/NWS Environmental Modeling Center (EMC). Many others in the meteorological community have done the same. One and a half years ago, the U.S. Congress, recognizing the problem, provided NOAA with 25 million dollars to buy a more powerful supercomputer. Amazingly, the U.S. administration has still not ordered the machine.

The reason is that NOAA had signed a long-term contract with IBM (a bad move, by the way) and IBM sold their supercomputer hardware business to Lenovo, a Chinese firm. The administration did not want to purchase such a computer from a Chinese firm. And so nothing has happened.

There were many options that could have fixed the problem. IBM could have purchased a supercomputer from CRAY, a U.S. firm. NOAA could have broken the contract with IBM. Or the administration could have gone ahead with the Lenovo machine (which was the same computer they would have bought anyway). But the Obama administration clearly is not very interested in weather prediction, and the problem has festered.

But it is worse than that. Other nations and groups are pushing ahead rapidly in weather computer acquisition, leaving the National Weather Service in the dust.

Yesterday, CRAY Computer announced the UK Met Office has ordered an extraordinary 125 million dollar system (CRAY's newest XC-40 hardware) that will deliver a throughput of roughly 15 petaflops (a petaflop is one quadrillion operations per second). The current NWS computer is capable of 0.21 petaflops and they are upgrading this fall to a machine of 0.8 petaflops. So the UKMET office will have nearly TWENTY TIMES the computer power of the U.S. The area of the US lower 48 states is 33 times larger than that of the UK.

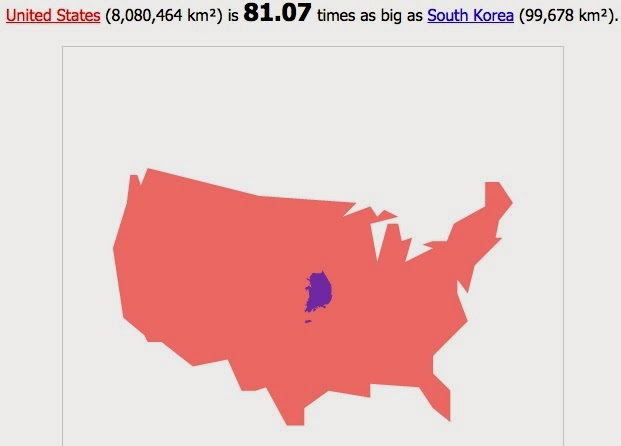

In June, the Korean Meteorological Administration (KMA) purchased TWO CRAY XC-30 computers, each capable of 3.1 petaflops. Yes, their new machines will be nearly FOUR TIMES faster than the UPGRADED U.S. weather computers. Let's see, Korea is 1/81 the size of the lower 48 states.

The European Center For Medium Range Weather Forecasting (ECMWF) just completed their first of several upgrades, buying two XC-30 computers from CRAY, each with 1.8 petaflops capacity--more than twice as fast as the U.S. upgrades. And importantly, ECMWF only does global prediction and thus does not have the responsibilities for high-resolution local forecasting like the National Weather Service. They need far less computer power, yet possess far more than the U.S. operational center.
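For readers who want to check the comparisons above, here is a minimal sketch that recomputes the ratios from the petaflop figures quoted in this post. The dictionary layout and the `ratio_to_nws` helper are illustrative names of my own, and the numbers are the quoted figures, not measured throughput on a weather model.

```python
# Petaflop figures as quoted in the post (not independently verified).
NWS_CURRENT = 0.21   # current NWS machine
NWS_UPGRADED = 0.8   # NWS machine after the planned upgrade

# center name -> (petaflops per machine, number of machines)
centers = {
    "UK Met Office": (15.0, 1),
    "KMA (Korea)": (3.1, 2),
    "ECMWF": (1.8, 2),
}


def ratio_to_nws(petaflops, baseline=NWS_UPGRADED):
    """Return how many times larger `petaflops` is than the NWS baseline."""
    return petaflops / baseline


for name, (per_machine_pf, count) in centers.items():
    single = ratio_to_nws(per_machine_pf)
    combined = ratio_to_nws(per_machine_pf * count)
    print(f"{name}: {single:.1f}x per machine, "
          f"{combined:.1f}x combined, relative to the upgraded NWS system")
```

The per-machine ratios are the ones used in the text: a little under twenty for the UK Met Office, just under four for each Korean machine, and a bit over two for each ECMWF machine.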

Heard enough? I have more examples, but the message is clear: the U.S. is rapidly falling behind in the computational resources necessary for high quality numerical weather prediction. Sadly, this administration has the funds for a major upgrade, one that would at least secure a petaflop machine capable of revolutionizing U.S. weather prediction, but they can't seem to figure out how to buy it.

I know a lot of people inside NOAA and National Weather Service, including scientists working on the next generation of weather prediction models. Many are frustrated by the lack of computer power--one of them recently complained to me there is not enough computer resource to test promising advances.

My back-of-the-envelope calculation is that the National Weather Service needs a minimum of 20-30 petaflops of computer power to provide the American people with state-of-the-science weather prediction that would improve the life of everyone in important ways.

For example, there are several reports by the U.S. National Academy of Sciences and other advisory groups suggesting that the U.S. needs ensembles (many forecasts run simultaneously) run at high resolution (2-3 km grid spacing) to provide better forecasts of thunderstorms, and particularly severe ones. Such ensembles would greatly improve the detailed weather forecasts for smaller-scale features in the rest of the country (Northwest folks, think Puget Sound convergence zone or mountain precipitation). But the NWS simply does not have the computer power to do it. New multi-petaflop machines would make it possible.

U.S. companies fork over millions of dollars a year to the European Center for the best forecasts...that would end with the new computers. And there are so many other critical forecast problems that would be lessened with more computer power, like better hurricane predictions days to a week out.

The U.S. atmospheric sciences community is the intellectual leader in meteorology and weather prediction and many of our research advances are applied overseas, such as at ECMWF and the UKMET office. The American people deserve to take advantage of the research they are paying for, but that can't happen with inferior computers and inferior forecasts. And yes, our forecasts are still inferior, with the NWS unable to match the resolution and data assimilation approaches of its rivals overseas.

Want proof? Here are the latest statistics for global 5-day forecasts at 500 hPa (about 18,000 ft above sea level) for several major international forecasting centers during the past month. Higher (closer to 1) is better. The top group is the European Center (ECM, the red triangle), with an average score of 0.911. The U.S. model (GFS) is nearly always below them and had frequent and disturbing "drop outs" where forecast skill plummeted for a day or so (U.S. average is 0.876). Second place is the UKMET office (orange circles, 0.897), and expect them to soar with their new hardware. U.S. forecasters in their weather discussions frequently talk about their dependence on the European Model. Unfortunate.

And we can't simply use the European Center for our weather predictions, since they will never do the high-resolution prediction over the U.S. that we need, among other things. That is the job of the National Weather Service.

So folks, how do we fix this?

First, the Obama administration needs to start taking weather prediction seriously, which they obviously don't. The President's Science Adviser John Holdren seems to be fixated on climate issues and does not appear to appreciate that good weather prediction is a primary means of protecting the American people from current and future extreme weather events. The administration needs to figure out a way to order a large multi-petaflop machine for the National Weather Service, getting past the objections of some bureaucrats about Lenovo computers. Or simply order a CRAY (I had lunch with a CRAY representative and they are enthusiastic about helping).

The President and Science Advisor John Holdren need to give more priority to weather prediction

Second, the American people and the weather community need to complain loudly about the current situation. The media can help us get the message out, something they did to great effect to secure the funding in the Sandy supplement in the first place.

Third, our congressional representatives need to make this a major issue and push the administration to act.

As I have noted in my earlier blog, securing adequate computer resources is only the first step in producing a renaissance in U.S. weather prediction capabilities. But it is a critical and important first step, and it is time to finally deal with this self-inflicted problem. Weather prediction is essential national infrastructure, like highways and education. With second rate infrastructure, a nation declines.

If nothing is done by September 2015, the money for the new weather supercomputer will be lost. It would be a tragedy for U.S. weather prediction and the American people. Let's make sure this does not happen.

Want to sign an online petition supporting improved computers for the National Weather Service? Go to this link!

Time to teach ECMWF some humility

Cliff: All valid points, so please accept this comment in the spirit that it's given.

Korea is not 81 times smaller than the U.S. Nothing can be 81 times smaller than something else. Korea is over 98% smaller than the U.S.

Pet peeve of mine; sorry. Keep up the excellent work.

This drives me nuts!!! I hate the fact that as the one major Superpower, we choose to bow down and accept mediocrity. I also despise governmental red tape that keeps us from accomplishing great things. If the money is there, cut the BS and let's upgrade and be the best! Not just for the safety of all Americans, but simply because that's who we are as a nation.

I just emailed my Rep. and put a link to this particular blog. I feel a little now...

Keep it up Cliff!!!

Why is the weather community so far behind on technology? The rest of the world has moved on to commodity hardware and software (e.g., Amazon AWS et al. and Linux) for their computational needs.

There is no need to spend $125 million. Update your software to work on a cluster of commodity hardware!

Cliff,

Should the administration appropriate the funds, how much computer power will the $25M buy? Are we going to see the 20-30 petaflops that you suggest are needed?

Alex.
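One rough way to frame this question is to scale from the only price-and-performance pair quoted in the post, the UK Met Office order (125 million dollars for roughly 15 petaflops). The sketch below does only that arithmetic. It assumes cost scales linearly with petaflops and that the UK figure is a fair reference price, neither of which the post confirms, so treat the result as an order-of-magnitude guess and not as the author's answer. The function and constant names are hypothetical.

```python
# Figures quoted in the post.
UK_SYSTEM_COST_USD = 125e6       # UK Met Office order
UK_SYSTEM_PETAFLOPS = 15.0       # "roughly 15 petaflops"
NOAA_APPROPRIATION_USD = 25e6    # funds provided by Congress


def petaflops_for_budget(budget_usd,
                         ref_cost_usd=UK_SYSTEM_COST_USD,
                         ref_petaflops=UK_SYSTEM_PETAFLOPS):
    """Estimate petaflops for a budget, ASSUMING linear price scaling."""
    cost_per_petaflop = ref_cost_usd / ref_petaflops
    return budget_usd / cost_per_petaflop


estimate = petaflops_for_budget(NOAA_APPROPRIATION_USD)
print(f"Linear-scaling estimate: about {estimate:.1f} petaflops")
```

Under that assumption the appropriation would buy a few petaflops: more than the planned 0.8 petaflop upgrade, but well short of the 20-30 petaflops suggested in the post.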

I agree fully! This reminds me of the beginning of the space race, and I think that this should be looked at as such. Making this a matter of national security and pride should be our priority. Falling behind and failing our Jr. Americans' future in meteorology, especially if global warming is going to be a focus in our not so distant future, should make this a priority! Where's Al Gore now when we need a politician to make a point where it is going to count? I think that we'll need a wealthy person in the private sector to get this off the ground.

I hope Paul is kidding or we have a seriously uneducated commentary on Cliff's blog. This is the kind of thought that causes the administration to make these errors... remember healthcare.gov? That was an effective (being sarcastic here) technological approach.

We all hope Clint Didier wins. He will take care of this stuff, Cliff. Get behind that dude. Plenty of room....

Tim, not kidding. Google and Apple use cheap commodity hardware almost exclusively for the operation of their data centers. This is a known fact. They are quite successful at it.

I would suggest you educate yourself before calling anyone else uneducated.

Paul, Tim and Others

Numerical weather prediction models are massively parallel (often thousands of processors), and this configuration, with very fast interconnect between nodes, is not necessarily what is available at commercial data centers. But perhaps someone reading this in the cloud computing community can tell us more..cliff

I had a poor experience with an Amazon AWS kind of system for NWP purposes. AWS is a system kind of created for many, many independent operations, or many small users. Such a system's architecture is not optimal for parallel computing. Further, the multinode/storage/connectivity fare formula would drain anyone's pockets. I spent all my allowance at the pre-processing stage :(.

Why not start a petition at change.org (I think that's it) demanding the money that was allocated be spent? Obama's policy says they will respond if a certain number of people sign the petition. I'm sure there would be enough to get an official response, as well as to elevate the importance of this purchase.

One problem with being an economic captive is that you have no voice. Obama's administration has no incentive to support newer systems, because nobody who directly cares would ever vote for a Republican at the national level anyhow. Your (Cliff's) vote is theirs irrevocably, so they would rather avoid offending anybody else rather than meeting your needs and those of business owners.

Why "the Obama Administration?" Head of NOAA was only just finally confirmed this year by Congress. After another obstructionist fight by Republicans. The person confirmed was the previous Assistant Secretary Environmental Observation & Prediction/Deputy Administrator, so now that position remains Vacant. The President and his Administration can only nominate.

Golly, I hope I'm wrong, but I see some eerie similarities with this and the run up for making the case to privatize the space launch business. Not that there are any guys behind the curtain trying to find the strings to pull a privatization puppet.

Cliff- Do you know which specific office is responsible for approving the purchase of equipment the Congress appropriated funds for?

Why not use GPGPU machines running commodity Nvidia CUDA graphics cards? That's what we run image processing jobs on at UW. GPUs are at least a thousand times more powerful than a CPU. And advances make upgrades easy (take out card, put in new card).

If I had that kind of dough I'd buy tons of high end graphics cards which can be easily replaced / upgraded / procured, rather than commit to a particular vendor's full hardware solution.

I'm also surprised that this problem isn't tackled by weather enthusiasts, similar to Seti@home or the protein folding problem. I know arguments (IMHO, whining) exist to say that the problem can't easily be divided, but I have to take a step back and say that whilst these people are experts in their field, they haven't thought of the thousands of different possible ways to tackle this issue.

All that said, I fully believe that the corruption level at this scale of spending makes it impossible to proceed and maintain a competitive edge. The best we can hope for is a new generation of coders and thinking about solving this in a transparent, open source, crowd sourced, "gamified" way. Having concerned weather enthusiasts competing to build distributed modeling and solve packets on idle cycles.

Folding@home is currently running something like 24 petaflops. Most of that is donated computer time.

Cliff,

Forgive me for editing your text... I did it! I made a petition for us at WhiteHouse.gov (We the People). (Long link) https://petitions.whitehouse.gov/petition/spend-25-million-already-appropriated-supercomputer-increased-weather-prediction-capabilities/jmpKNlSf or (short link) http://wh.gov/icpSx Spend the $25 million already appropriated on a supercomputer for increased weather-prediction capabilities.

Please feel free to share these links far and wide... it takes many signatures for the administration to address the issues raised by these petitions, but it can and does happen!

By the way, it takes 150 signatures for the petition to be publicly searchable on the We the People site. I know that between this blog and my Twitter/Facebook pages we can reach many more than that number, but I hope you will take the time to actually go SIGN the petition. It is simple! Thanks

P.S. I've seen some petitions with as few as 2000 signatures get addressed by the administration, but only when they can be lumped in by subject matter with other, bigger petitions (Obama responded to 33 petitions about reducing gun violence in one response, for example; the biggest petition there had almost 200,000 signatures and many of them were over 5000). The smallest single-issue petition I've seen get a formal response was about 5000 signatures.

I agree with DC. Having done GPGPU computing for our small business, and also participated in World Community Grid, Seti@home and Folding@home, the distributed computing power available in this mode is simply enormous and untapped.

Combine that with the capability to "upgrade" the system naturally as different technologies are rolled out to the tech community, this makes a supercomputer one-time purchase seem like a very poor choice.

I appreciate that some of these problems are "easier" to program monolithically. But in this distributed computing enabled world parallel processing, at multiple levels (in one box, in one cluster, in one integrated region, etc.) makes far more sense.

Tim and DC,

GPUs, MICs, etc. have been tested extensively for use in numerical weather prediction. So far they have generally been unsuccessful due to technical issues I won't get into here. Eventually, they will be an option, but they are not now. And the SETI approach does not work for weather prediction because of the communication delays. Trust me..this has really been evaluated. Numerical weather prediction needs large numbers of processors/nodes (now thousands) coupled with high bandwidth interconnects..cliff

Cliff, there is lots of precedent for running WRF on Nvidia's CUDA. CUDA has rescaled everything, and it's changing things at a pace that may deserve revisiting by your modeling friends.

Here are some inputs if people could get more behind a DIY (not lock in contract) gpgpu model.

The new Tesla k40 GPU from Nvidia (released sept 14) is around 4.2 single precision Tflops per card. They cost (at newegg) around $4400 each. Round up to 5k and assume that leftover goes to motherboard, ram, hard drive and network. About 50 of those ($250,000) puts us at parity with the current US computer.

You can fit ~6 in a commodity case (like ours), so that's about 9 of those 4U sized computers, which almost fills a full sized 42U computer rack.
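The arithmetic in the two paragraphs above can be reproduced with a short sketch. It uses only the figures given in this comment (4.2 single-precision teraflops per card, about $5,000 per card including a share of the host hardware, 6 cards per 4U chassis) and the 0.21 petaflop figure quoted in the post for the current NWS machine. The function name and the integer gigaflop units are choices made here for illustration. It counts peak card throughput only and says nothing about interconnect or sustained performance on a weather model.

```python
import math

# Figures taken from the comment above; integer gigaflops avoid float rounding.
CARD_GFLOPS = 4_200          # 4.2 single-precision teraflops per card
COST_PER_CARD_USD = 5_000    # rounded-up price, assumed to cover host parts
CARDS_PER_CHASSIS = 6
CHASSIS_HEIGHT_U = 4
RACK_HEIGHT_U = 42

NWS_CURRENT_GFLOPS = 210_000  # 0.21 petaflops, as quoted in the post


def gpu_cluster_estimate(target_gflops):
    """Count cards, chassis, rack units and cost for a peak-throughput target."""
    cards = math.ceil(target_gflops / CARD_GFLOPS)
    chassis = math.ceil(cards / CARDS_PER_CHASSIS)
    rack_units = chassis * CHASSIS_HEIGHT_U
    return {
        "cards": cards,
        "cost_usd": cards * COST_PER_CARD_USD,
        "chassis": chassis,
        "rack_units": rack_units,
        "fits_one_rack": rack_units <= RACK_HEIGHT_U,
    }


print(gpu_cluster_estimate(NWS_CURRENT_GFLOPS))
```

The result matches the numbers in the comment: 50 cards, $250,000, and 9 chassis taking 36U of a 42U rack.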

You need a lot of other things like clever humans and buildings and cooling but the scale of these new GPUs is incredible. We had ours built and installed Debian Jessie on it without too much issue.

I know we operate at a totally different scale... But these gpgpu machines have changed our game in post processed SAR and image processing problems. It took code rework but the scale rewards are tremendous.

Sources:

http://m.newegg.com/Product?itemNumber=N82E16814132015&Keyword=nvidia%20k40

http://www.nvidia.com/object/tesla-servers.html

http://www.supermicro.com/products/nfo/gpu.cfm

DC wrote: " Round up to 5k and assume that leftover goes to motherboard, ram, hard drive and network. "

What systems like the Cray bring to the table are *massively* fast backplanes.

For the type of problem that weather systems are, a "supermicro" or GPU system requires an interconnect like Infiniband... and right there you're near (or beyond) doubling the cost of the per-board basis.

And even then you're below a Cray's "fabric" interconnect scheme. Infiniband's 40 Gb/s hookups still have a significant delay (on the order of hundreds of nanoseconds) where a Cray might have 50.

There still are places where "big iron" fills the niche.